We Mistake "Hasn't Failed Yet" for "Won't Fail"

May 17, 2010

AWS had four regions. US East, US West, Europe, and Asia Pacific. That was the whole cloud, more or less. Most companies running serious workloads were still asking whether they could trust it at all.

That morning, Jeff Barr published a blog post. It was short, technical, conversational in the way Jeff always was. He was announcing that RDS now had a “High Availability” option. He called it Multi-AZ. One parameter, set to true, and Amazon would spin up a hot standby in a second availability zone, synchronously replicate every write, and fail over automatically in about three minutes if the primary went down. Your application wouldn't even need to know it happened.

In 2010, high availability for a managed database meant your DBA had a plan and a phone number to call at 2am. It meant runbooks, manual failover scripts, and potentially someone driving to a data center. The notion that a piece of infrastructure could sense its own failure and reconstitute itself, invisibly, while your application kept serving traffic, was new to most of the people reading that post.

Jeff wrote that availability zones had "independent power, cooling, and network connectivity." Fourteen words that would quietly become load-bearing assumptions for an entire industry.

For the next fifteen years, those fourteen words held. Until they didn't.

***

For about sixteen years, I believed in multi-AZ the way you believe in gravity.

Every architecture review I sat in, every Well-Architected assessment, every "is this prod-ready?" Operational Readiness Review pointed to the same thing: spread your workload across availability zones and you've handled the big one. I never really questioned it, neither did the people I worked with. At some point it stopped feeling like a design decision and started feeling almost like a law of nature.

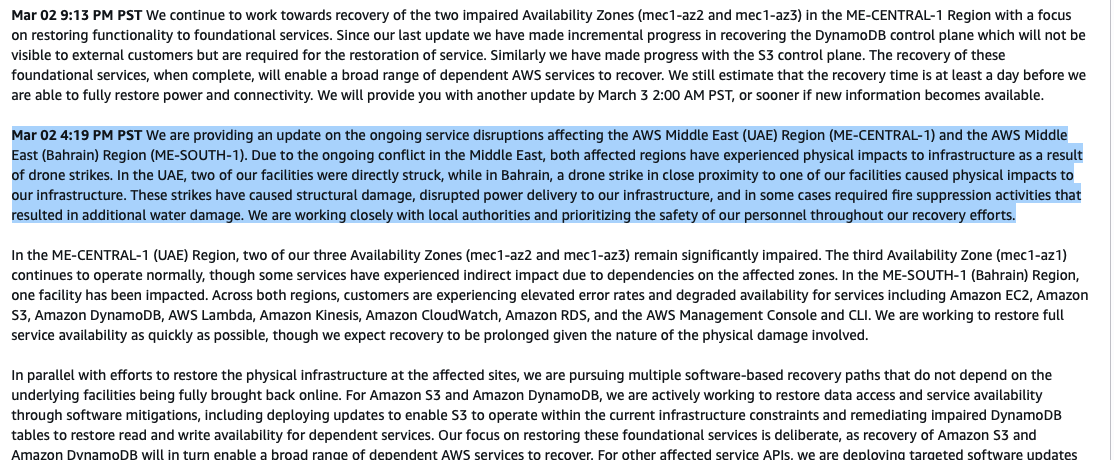

Then a single event took down two Asian regions simultaneously. And the assumption didn't bend. It shattered.

Around the same time, a different assumption cracked. Not in one dramatic moment, but across a series of events that added up to the same thing. Trump sanctioned the International Criminal Court, and Microsoft implemented those sanctions, locking the ICC's chief prosecutor out of his Outlook email. A Microsoft executive then told a French Senate committee, under oath, that the company cannot guarantee European customer data will never be handed to US authorities because the CLOUD Act requires US companies to comply with US government requests regardless of where the data physically sits. European data stored in European data centers, operated by a US company, is still subject to US law.

Two load-bearing assumptions, gone inside a few months.

***

There's a pattern here that I keep coming back to, and I think it sits at the intersection of psychology, probability, and how organizations actually work.

When an assumption holds long enough, it stops being an assumption. It becomes furniture. You stop seeing it because it was always there. And crucially, every day it holds true feels like evidence that it was always correct. The confidence compounds and the scrutiny fades.

That's a measurement error. We're tracking frequency, not fragility. The assumption isn't getting stronger with each passing day. The exposure is just accumulating quietly, out of sight.

This is what makes the prevention paradox so insidious. The longer nothing goes wrong, the more confident you feel. The more confident you feel, the less you invest in questioning the foundations. And so the fragility grows precisely because things have been going so well.

Scientists have a name for the thing that prevents this trap: falsifiability. A good scientific hypothesis is one that can, in principle, be proven wrong. You hold it provisionally. You actively look for the counterexample. The absence of failure doesn't confirm the hypothesis; it just hasn't been refuted yet.

Organizations are terrible at this because falsifiability is uncomfortable. Treating your foundational assumptions as provisional feels destabilizing. It requires admitting that what you built on might not hold. Most organizational cultures punish that kind of questioning, or at least fail to reward it.

So we get this strange situation: some of the most technically sophisticated organizations in the world run on assumptions they've never seriously stress-tested; Multi-AZ as physics, cloud providers as neutral infrastructure, and government as a stable, predictable background condition.

***

Taleb's black swan is often misread as a story about rare events but I think it's more a story about accumulated fragility. Everything that was quietly wrong beforehand, the untested assumptions, the confidence that compounded without scrutiny, the floor that was never as solid as it felt. The event just ends the period of not knowing. What follows is disorienting precisely because the ground shifted under something we stopped questioning years ago.

I think this is worth sitting with, because the reflex after a shattered assumption is usually to over-react, replace it with a new one, and move on. We update the architecture, patch the policy, and add multi-region to the checklist. And then, gradually, the new assumption starts to calcify too.

The harder question you should ask yourself now is: what are we still treating as physics that isn't?

I don't have a clean answer. But I think the practice, the actual resilience practice, is to hold your foundational assumptions more loosely. To ask periodically: if this turns out to be wrong, what breaks? To make the questioning normal rather than exceptional. To treat "hasn't failed yet" as exactly what it is: a run of confirming evidence, not proof.

That's uncomfortable and it requires a kind of epistemic humility that organizations tend to select against. But the alternative is waiting for the next black swan to do the work for you.

//Adrian